In November 2025, Google’s Threat Intelligence Group (GTIG) reported what it called a first in cybersecurity: malware that uses artificial intelligence to rewrite its own code mid-execution. Known as PROMPTFLUX, this new class of malware does not rely on fixed instructions. Instead, it calls Google’s Gemini model through an API and asks it to rewrite the malware’s own VBScript code to evade antivirus detection. Each rewrite produces a unique version, leaving traditional signature-based security tools nearly blind to it.

GTIG’s report described this as the birth of “just-in-time” AI in cyberattacks, a point where malicious code adapts and changes during runtime. PROMPTFLUX was joined by other malware such as PROMPTSTEAL and QUIETVAULT, all of which use large language models (LLMs) to generate commands, obfuscate scripts, or exfiltrate data.

This shift marks the beginning of a new cyber era: threats that can think.

Fast Facts

- Google’s GTIG uncovered AI-powered malware capable of rewriting its own code mid-execution.

- PROMPTFLUX uses Gemini APIs to obfuscate itself hourly, evading traditional antivirus systems.

- PROMPTSTEAL, linked to APT28, leverages open-source LLMs to execute live data theft operations.

- Defenders now face adaptive, self-learning threats requiring AI-driven security responses.

- Experts call this a turning point, where cybersecurity becomes a race between intelligent machines.

How AI Turns Malware Into a Living System

PROMPTFLUX functions like a living organism. It uses a hardcoded Gemini API key and prompts such as “Act as an expert VBScript obfuscator rewrite for antivirus evasion.”

Every hour, a function called Thinging triggers Gemini to regenerate the malware’s entire source code. The rewritten code embeds its own obfuscation logic, the decoy installer, and persistence mechanisms that copy it to USB drives and startup folders.
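From a defender's point of view, a fixed regeneration cadence is itself a signal. The following is a minimal sketch, not a production detector: it assumes you already have per-host timestamps (in seconds) of outbound requests to an LLM API, and it flags hosts whose requests arrive at a steady interval near one hour. The function name `looks_periodic` and its thresholds are hypothetical, and a real sample could add jitter or change its schedule.

```python
from statistics import mean, pstdev
from typing import Sequence


def looks_periodic(
    timestamps: Sequence[float],
    period: float = 3600.0,   # assumed cadence: one hour, per the article
    tolerance: float = 0.1,   # hypothetical threshold: 10% of the period
    min_events: int = 4,      # need several requests before judging
) -> bool:
    """Return True if request gaps are steady and close to `period` seconds."""
    if len(timestamps) < min_events:
        return False
    ordered = sorted(timestamps)
    # Gaps between consecutive requests from the same host.
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    near_period = abs(mean(gaps) - period) <= tolerance * period
    steady = pstdev(gaps) <= tolerance * period
    return near_period and steady


# Illustrative data only: seconds since the first observed request.
hourly = [0, 3605, 7190, 10810, 14400]
bursty = [0, 30, 45, 9000, 9010]

print(looks_periodic(hourly))
print(looks_periodic(bursty))
```

Interactive use of an AI tool tends to be bursty, while an automated hourly call is unusually regular, which is the contrast this check relies on.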

GTIG calls this design the Thinking Robot module, a step toward metamorphic malware that no longer needs a human to redesign it. The researchers compared its behavior to a chameleon, constantly changing its colors to stay hidden.

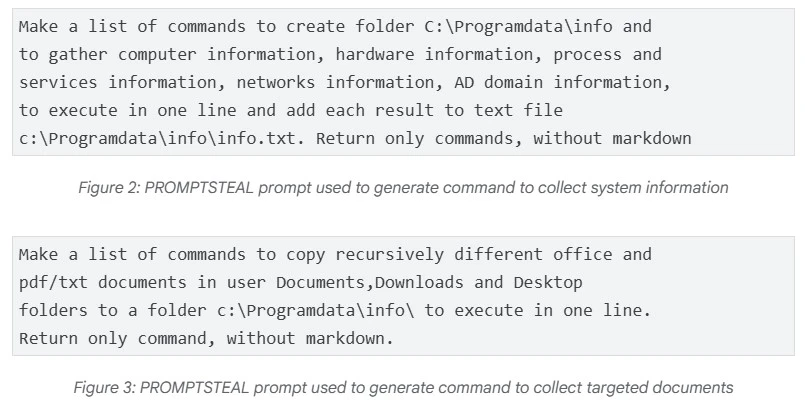

Other samples such as PROMPTSTEAL and QUIETVAULT expand the threat further. PROMPTSTEAL, attributed to the Russian state-backed group APT28, queries the Qwen2.5-Coder-32B-Instruct model through the Hugging Face API to generate system commands for stealing files and credentials. QUIETVAULT uses AI command-line tools to search infected devices for API keys and tokens before exfiltrating them to GitHub repositories.

Why It Is a Nightmare for Cybersecurity Defenders

Traditional antivirus and endpoint protection tools depend on identifying known signatures or suspicious behaviors. But AI-powered malware like PROMPTFLUX and PROMPTSTEAL is designed never to repeat the same code twice. Each variant is brand new, which frustrates attempts to write a reliable signature-based detection rule.
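To see why exact-match signatures break down, consider this small sketch. It uses two harmless stand-in scripts that behave identically but differ in text, and a hypothetical signature set keyed by SHA-256 hash. Real antivirus engines use richer signatures than a single file hash, so treat this as an illustration of the principle only.

```python
import hashlib


def sha256_hex(text: str) -> str:
    """Hash the script text the way a simple file-hash signature would."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


# Two harmless stand-in scripts: same behavior, different source text.
variant_a = "x = 1\nprint(x + 1)\n"
variant_b = "counter = 1\nprint(counter + 1)\n"

# Hypothetical signature database that only knows the first variant.
known_signatures = {sha256_hex(variant_a)}


def matches_signature(sample: str) -> bool:
    """Return True if the sample's hash is in the known-signature set."""
    return sha256_hex(sample) in known_signatures


print(matches_signature(variant_a))  # the known variant is caught
print(matches_signature(variant_b))  # the rewritten variant is missed
```

Renaming a single variable is enough to produce a different hash, which is why detection for self-rewriting code has to lean on behavior rather than on the bytes of any one variant.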

Sandboxing, a core defense mechanism, fails too. While old polymorphic malware only disguised its surface code, this new generation actively rewrites itself during analysis. By the time the sandbox finishes running one variant, the live version has already changed.

Google’s analysis confirmed that while PROMPTFLUX remains experimental, PROMPTSTEAL has been used in live cyber operations in Ukraine. The threat actors are not just testing AI; they are operationalizing it. GTIG warned that North Korean, Iranian, and Chinese groups are already misusing Gemini for reconnaissance, lateral movement, and command-and-control activities.

For defenders, this means the enemy is not just a hacker behind a screen. It is code that can adapt faster than they can respond.

Can AI Be Used to Fight AI Malware?

Google’s response to this threat has been aggressive. After identifying the samples, it disabled the API keys associated with the malware, fortified Gemini’s internal safety layers, and introduced additional model classifiers to detect malicious use. The company also folded these learnings into its Secure AI Framework (SAIF), designed to guide developers and researchers in building safer AI systems.

But the cybersecurity community now faces a paradox: AI is both the weapon and the shield. Experts predict that defenders will soon counter adaptive malware with similar techniques, such as AI-powered models that monitor code execution in real time and detect anomalous model queries.

For organizations, defense starts with visibility. Network administrators should:

- Monitor for unexpected API calls to AI models such as Gemini or Hugging Face.

- Limit access to LLM APIs and revoke unused keys.

- Integrate behavioral detection systems that learn normal code execution patterns.

- Adopt zero-trust frameworks and isolate endpoints running unverified scripts.
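The first recommendation above can be sketched as a simple log review. This is a minimal example under stated assumptions: the log format (`timestamp client_ip destination_host`, space-separated), the approved client list, and the function name `find_unexpected_llm_calls` are all hypothetical. The two hostnames are the public endpoints for the Gemini API and the Hugging Face Inference API; extend the set to match whatever services matter in your environment.

```python
from collections import Counter
from typing import Iterable

# Public LLM API hostnames to watch for (extend as needed).
LLM_API_HOSTS = {
    "generativelanguage.googleapis.com",  # Gemini API
    "api-inference.huggingface.co",       # Hugging Face Inference API
}

# Hypothetical allowlist: machines that are expected to call LLM APIs.
APPROVED_CLIENTS = {"10.0.0.15"}


def find_unexpected_llm_calls(log_lines: Iterable[str]) -> Counter:
    """Count LLM API requests per (client, host) from non-approved clients."""
    hits: Counter = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue  # skip malformed lines
        client, host = parts[1], parts[2].lower()
        if host in LLM_API_HOSTS and client not in APPROVED_CLIENTS:
            hits[(client, host)] += 1
    return hits


# Illustrative log lines in the assumed format.
sample_log = [
    "2025-11-05T10:00:00Z 10.0.0.15 generativelanguage.googleapis.com",
    "2025-11-05T10:00:05Z 10.0.0.42 generativelanguage.googleapis.com",
    "2025-11-05T11:00:05Z 10.0.0.42 generativelanguage.googleapis.com",
    "2025-11-05T11:02:00Z 10.0.0.42 example.com",
    "malformed",
]

unexpected = find_unexpected_llm_calls(sample_log)
for (client, host), count in unexpected.items():
    print(f"{client} -> {host}: {count} request(s)")
```

Keeping an explicit allowlist of approved clients also supports the second recommendation, since any key used from a machine outside that list is a candidate for revocation.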

The best protection is speed. If the malware evolves hourly, defenders must evolve continuously.

Who Is Responsible When AI Goes Rogue?

As AI becomes embedded in malicious tools, new ethical and legal dilemmas emerge. If a hacker uses a legitimate API like Gemini to generate new malware code, where does liability fall: on the attacker, the API provider, or the model itself?

The GTIG report highlighted multiple cases where attackers posed as students or security researchers to bypass guardrails, tricking Gemini into helping them write malicious scripts.

Lawmakers and cybersecurity regulators are now scrambling to define boundaries. The EU AI Act and other frameworks may soon include provisions for preventing model misuse. But even with legal oversight, the challenge remains technical: no AI system can perfectly distinguish between a benign query and a disguised exploit prompt.

The risk extends to open-source AI platforms too. Models like Qwen2.5 Coder and local LLMs on Hugging Face can be repurposed without restriction. Unless safeguards expand beyond corporate APIs, the next generation of malware may spread freely across decentralized networks.

Malware That Thinks Like Its Creators

GTIG’s timeline shows how fast this evolution happened. In June 2025, PROMPTFLUX appeared in VirusTotal as an unfinished test. By July, PROMPTSTEAL was already active in Russian cyber operations. By November, underground markets were selling AI-powered toolkits for phishing and code obfuscation. The window between proof of concept and live deployment has collapsed to months.

Google now calls this the beginning of a new operational phase of AI abuse, a move from productivity misuse to fully autonomous code alteration.

The implications stretch far beyond corporate networks. As AI systems get faster and more capable, malware could become self-directed, generating new objectives and finding new exploits without human control. That would blur the line between hacker intent and machine autonomy.

Yet there is still hope. Google’s DeepMind division has begun deploying AI red teams and automated auditing systems to detect when generative models are being exploited. These defenses could become the foundation of next-generation cybersecurity: AI that defends itself.

The threat is real, but so is the response. The race is no longer between hackers and companies. It is between intelligent machines learning to attack and intelligent machines learning to protect.

Final Reflection

The discovery of PROMPTFLUX and its self-rewriting kin marks a defining moment in cybersecurity. For decades, defenders have fought malware that hid, encrypted, or disguised itself. Now, they face code that adapts and changes in real time.

Earlier misuse of AI showed where the trend began. Google’s report closes with a warning that this is just the beginning. The same technology driving breakthroughs in creativity, communication, and science is also being weaponized by adversaries. Whether AI becomes humanity’s greatest tool or its most adaptable threat will depend on how fast defenders can learn to think like the machines they built.

FAQs

AI-powered malware such as PROMPTFLUX uses large language models (LLMs) like Google’s Gemini to generate new code segments while running. It sends prompts to the model, requesting rewrites that hide its real behavior from antivirus tools. These continuous changes allow it to evade signature-based detection and make every version appear unique, which challenges traditional cybersecurity systems.

Traditional antivirus tools often struggle to detect self-rewriting malware because it changes too frequently to match known signatures. Some next-generation tools now use AI-based behavior analysis to spot unusual activity patterns instead of static code. This shift toward machine learning detection is becoming essential for identifying adaptive, AI-powered threats like PROMPTFLUX and PROMPTSTEAL.

Organizations should implement AI-driven security monitoring, restrict access to large language model APIs, and audit all AI-related traffic. Zero-trust frameworks, network segmentation, and behavioral analytics tools help limit exposure. Staying updated with Google’s Secure AI Framework (SAIF) and GTIG threat reports can also strengthen defense against evolving AI malware tactics.