In Hong Kong, a 17-year-old student named Chow Sze-lok set out to solve a problem that haunts parents everywhere. She asked a simple but powerful question: What if AI could protect children when adults fail to notice the signs of abuse?

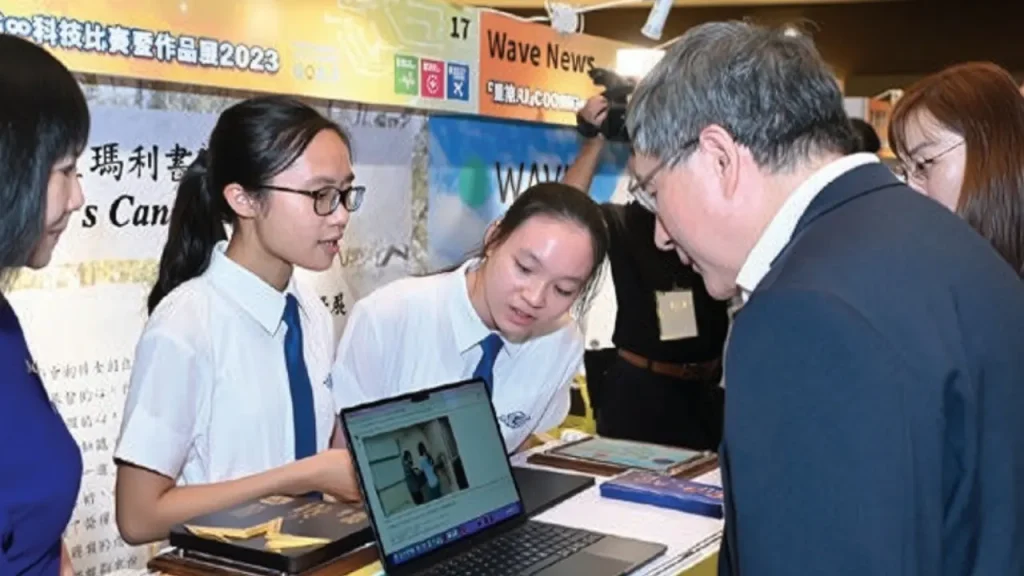

Chow and her school team at St. Mary’s Canossian College built KidAID, an AI-powered system designed to detect child abuse in daycare centers. Unlike regular CCTV cameras that only record, KidAID watches footage in real time. It identifies unusual movements or troubling interactions and flags them for review.

“I wanted to create something that enables us to see what a human being can often overlook, so that we can take care of those children who cannot defend themselves.”

— Chow Sze-lok (Edinbox)

Even more remarkably, the project was developed in just six months on an aging school laptop.

Fast Facts

- Teen Innovator: Chow Sze-lok, 17, created KidAID in Hong Kong.

- Purpose: AI system designed to detect signs of daycare abuse.

- Development: Built in six months on an old school laptop.

- Method: Trained using simulated scenarios instead of real footage.

- Recognition: Awarded at the Geneva International Exhibition of Inventions.

Why Daycare Abuse Often Goes Undetected

Parents want to believe their children are safe in daycare. Sadly, abuse cases show that this is not always true. Globally, the World Health Organization estimates that 1 in 5 women and 1 in 13 men report having been sexually abused as children. Some of these cases occur in institutional settings.

In the U.S., a 2019 study found that 1 in 11 child abuse reports involved childcare facilities. In Hong Kong alone, more than 1,200 abuse cases were reported in 2023, with a portion linked to daycare and school settings.

The challenge is that young children often cannot explain what happened. Parents may notice bruises or changes in behavior, but these signs are easy to miss. Even with CCTV in many daycare centers, footage must be reviewed manually, which takes time and often results in overlooked incidents.

This is where Chow’s idea becomes powerful. Instead of relying only on human review, AI can analyze footage 24/7 without getting tired or distracted.

How KidAID Works

KidAID uses machine learning to analyze CCTV video. It tracks body movements across frames and looks for patterns that may signal abuse, such as aggressive gestures, prolonged isolation, or distress. If audio is included, it could even flag raised voices or signs of crying.
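To make the idea of tracking movement across frames concrete, here is a minimal sketch. It is not KidAID's actual code, which has not been published. It assumes a hypothetical pose-estimation step has already produced one (x, y) position per frame for a single tracked point, such as a wrist, and it flags stretches where that point moves unusually fast. The function names, the frame rate, and the speed threshold are all illustrative assumptions.

```python
from math import hypot


def frame_speeds(points, fps):
    """Speed in pixels per second between each pair of consecutive frames."""
    return [
        hypot(x2 - x1, y2 - y1) * fps
        for (x1, y1), (x2, y2) in zip(points, points[1:])
    ]


def flag_fast_motion(points, fps=10, speed_limit=300.0, min_run=2):
    """Return indices of frame-to-frame transitions that sit inside a run of
    at least `min_run` consecutive transitions faster than `speed_limit`.

    Requiring a run (rather than one fast transition) is a crude way to
    ignore single-frame tracking glitches. All defaults are assumptions.
    """
    flagged, run = [], []
    for i, speed in enumerate(frame_speeds(points, fps)):
        if speed > speed_limit:
            run.append(i)
            continue
        if len(run) >= min_run:
            flagged.extend(run)
        run = []
    if len(run) >= min_run:  # handle a run that reaches the last frame
        flagged.extend(run)
    return flagged


# Hypothetical track: slow movement, then two fast jumps, then slow again.
track = [(100, 100), (102, 101), (104, 101), (150, 140), (200, 185), (202, 186)]
print(flag_fast_motion(track))  # [2, 3]
```

A rule this simple cannot tell a shove from a game of tag, which is why a learned model and human review would still be needed on top of it.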

Because using real footage of children was not possible due to privacy laws, Chow and her team filmed simulated daycare scenarios at school. Students acted out interactions, both normal and harmful, to give the AI examples of what to detect.

The system was built on open-source tools like Python, TensorFlow, and OpenCV. The concept is powerful, but the limitations are clear. A single school laptop can only train models on small datasets, and accuracy can suffer as a result. In fact, researchers warn that even highly accurate models can misinterpret playful roughhousing as abuse.

Still, KidAID shows how even with limited resources, a focused student project can push boundaries and reveal what is possible for the future.

The Hard Questions: Ethics, Accuracy, and Privacy

KidAID sparks excitement, but also raises questions that cannot be ignored.

Accuracy Concerns: AI can detect patterns, but it lacks context. A raised hand might signal abuse or simply a caregiver helping a child up. False positives could create unnecessary fear, while false negatives might miss real abuse. This is why experts insist AI should support, not replace, human oversight.
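A short worked example shows why false positives matter so much when real abuse is rare. The counts below are invented for illustration and do not come from KidAID or any study. Precision is the share of alerts that were real incidents, and recall is the share of real incidents that triggered an alert.

```python
def alert_metrics(true_pos, false_pos, false_neg):
    """Return (precision, recall) for a set of alert outcomes.

    precision = real incidents among all alerts raised
    recall    = real incidents that were caught among all real incidents
    """
    alerts = true_pos + false_pos
    incidents = true_pos + false_neg
    precision = true_pos / alerts if alerts else 0.0
    recall = true_pos / incidents if incidents else 0.0
    return precision, recall


# Hypothetical month of footage: 10 real incidents, 100 alerts raised.
precision, recall = alert_metrics(true_pos=8, false_pos=92, false_neg=2)
print(f"precision={precision:.2f} recall={recall:.2f}")
# precision=0.08 recall=0.80
```

In this made-up scenario the system catches 8 of 10 real incidents, yet 92 of every 100 alerts are false alarms. Each of those alerts would still need a person to review it before anyone draws a conclusion.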

Ethics: Filming children raises serious concerns. Parents may not be comfortable with their kids being monitored by an algorithm. In Hong Kong, the Personal Data (Privacy) Ordinance requires parental consent and secure data storage for CCTV in child-focused facilities.

Bias Risks: Because KidAID was trained on a limited set of staged scenarios, it may not perform well across diverse cultures or environments. Behaviors considered “abnormal” in one setting may be normal in another.

The safest path forward is using AI as a partner to caregivers. It can raise red flags, but trained staff and parents must always make the final judgment.

Recognition, Public Reaction, and the Bigger Picture

Despite its experimental stage, KidAID has already gained attention. Chow reportedly received recognition at the Geneva International Exhibition of Inventions, earning a bronze medal for her innovative work. She also placed second in the SCMP Student of the Year awards in science and math.

Experts who reviewed the project noted that while KidAID faces limitations, its social impact potential is enormous. If developed further, it could complement existing surveillance systems with a focus on child safety.

“An innovative youth has left the world in incredulity with the invention of an AI tool which identifies child abuse in child care centers, bringing hope and protection to areas where it is common for silent victims to go unnoticed.”

— Edinbox (edinbox.com)

Public reaction has been overwhelmingly supportive. On platforms like Reddit and Facebook, users praised Chow’s determination, often highlighting that she achieved this using nothing more than an aging school laptop.

“17-Year-Old Builds AI to Detect Child Abuse in Daycares… made on just an old laptop.”

— Reddit community reaction (reddit.com)

This mix of admiration and curiosity makes KidAID a story that resonates far beyond Hong Kong.

What This Means for Parents, and the Future of AI in Childcare

For parents, KidAID is a reminder that technology is moving closer to solving real-world problems. But while we wait for AI systems like this to mature, there are steps families can take today:

- Check Daycares Carefully: Ask about staff qualifications, background checks, and safety records.

- Understand Monitoring Policies: Some daycares allow parents to access live CCTV feeds through apps.

- Watch for Warning Signs: Sudden fear, unexplained injuries, or changes in sleep patterns should not be ignored.

- Use Digital Tools: Safety apps like Life360 or parental control tools can offer peace of mind.

- Advocate: Push for better safety protocols and transparency in child care facilities.

Looking forward, many experts believe AI surveillance will become common in daycares by 2030. Costs are dropping, and demand for accountability is rising. If KidAID or similar systems evolve, they could offer real-time protection that was unimaginable just a few years ago.

Chow’s story is also part of a bigger trend: young innovators using AI for social good. From detecting online child exploitation to building apps that protect kids during emergencies, technology is being reshaped not just by corporations but by determined individuals with a vision.

For guidance on how to develop AI responsibly in children’s environments, see UNICEF’s Policy Guidance on AI for Children.

Final Thoughts: A Laptop, Six Months, and a Mission to Protect Kids

Chow Sze-lok’s project started as a response to heartbreaking stories of daycare abuse. It grew into KidAID, a system that shows how AI could one day help protect the most vulnerable members of society.

Her journey is more than a science project. It is a story of empathy, innovation, and courage. It shows that even with limited tools, young people can create technology that sparks global conversations.

For parents, educators, and policymakers, KidAID raises difficult but necessary questions. How do we balance safety with privacy? How do we make sure AI helps without replacing human care? And most importantly, how do we protect children before tragedy strikes?

The answers are still forming, but one thing is clear: a teenager with a vision has already changed the way we think about child safety.

FAQs

Is KidAID being used in real daycare centers?

No. KidAID remains a student-led project developed in a school environment using simulated footage. While it has received recognition at innovation competitions, it has not been deployed in actual daycare centers. For real-world adoption, it would need rigorous testing, partnerships with child protection organizations, and compliance with privacy laws.

Can AI replace human caregivers or supervisors?

AI cannot replace human caregivers or supervisors. Systems like KidAID are designed to assist by monitoring patterns continuously and raising alerts. Human judgment is essential to interpret AI findings, provide context, and ensure children’s well-being. Experts recommend using AI as a support tool rather than a replacement.

How can children’s privacy be protected under AI monitoring?

Strict safeguards must be in place, including parental consent, encrypted data storage, and limited access to recordings. Regulations such as Hong Kong’s Personal Data (Privacy) Ordinance and the EU’s GDPR highlight that children’s data requires the highest level of protection. Without these measures, AI monitoring could create new risks rather than solve existing ones.

How does KidAID compare with commercial surveillance systems?

Most commercial surveillance systems like Verkada or Avigilon focus on general security issues such as theft or intrusion. KidAID differs because it was designed specifically to detect subtle abuse-related behaviors in childcare settings. However, commercial systems benefit from larger datasets and more powerful infrastructure, while KidAID is still experimental and resource-limited.